DiffEdit - Semantic Image Editing with Stable Diffusion

by John Robinson @johnrobinsnThis notebook implements the paper, "DIFFEDIT: DIFFUSION-BASED SEMANTIC IMAGE EDITING WITH MASK GUIDANCE".

Diffusion models have revolutionized the space of generative models for many domains, but most notably for image generation. Not only can you generate amazing images given a random seed and a text prompt to guide the diffusion. But you can also "edit" or modify the images in a targeted way using inpainting techniques. Most of these inpainting techniques require a binarized mask to isolate changes and while generating these masks is straightforward it can be quite labor intensive to create them.

This paper describes an approach of generating a prompt-directed mask using the same diffusion model used to generate and inpaint the images, thereby leveraging the investment already made in training the diffusion model (ie Stable Diffusion in this case.) The DiffEdit algorithm takes as input an image and two prompts. The first prompt, reference prompt, describes the object currently present in the image to be "removed" or edited out. The second prompt, query prompt, describes the object that should take its place within the image. These two prompts are used to derive a binarized mask that can be used to inpaint (or "paint in") what is described by the query prompt.

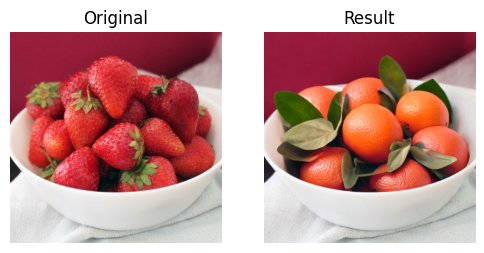

eg. "A Bowl of Strawberries" => "A Bowl of Oranges"

Stable Diffusion works by adding random gaussian noise to an image and iteratively across some number of timesteps uses a neural network to predict the amount of noise to remove from the noised image, eventually arriving at an image that has all of the noise removed. Since this is a stochastic process the image that we end up with won't necessarily be anything close to the image we started off with. This noise removal process can also be guided by a text prompt which will steer the denoising process.

DiffEdit leverages this denoising behavior to predict noise for both the reference prompt and the query prompt. With the observation that given the same random seed that the noise for areas of the image not being guided by the prompt will be very similar, whilst the predicted noise in areas being actively guided by the prompts will differ much more (different textures/colors etc). We can basically do a localized distance calculation to establish which areas should be modified based on comparing these noise values.

One detail that probably enables DiffEdit is that Stable Diffusion utilizes a Variational Auto Encoder (VAE) to compress the images into a latent representation. This has several benefits. One being that the input image dimensions of 512 by 512 (3 channel) pixels is compressed down to a (1,4,64,64) shaped tensor called a latent. The means that the model has to deal with a much smaller amount of data, which makes the model much more efficient and practical. But with respect to the DiffEdit algorithm, each 4-channel "pixel" of the latent representation encodes not only color data but also encodes textures spatially (8x8 patch). This greatly improves the contrast when the differencing operation is performed.

Continue on with this notebook to see my implementation and some demos of it in action.

Many thanks to the authors of the paper for their ideas and inspiration.

Also Special Thanks to Jeremy Howard for all of his great tips and techniques for reading and understanding academic papers that he shared in his From Deep Learning Foundations to Stable Diffusion Course

Click on one of these buttons to open the notebook.

Share on Twitter | Discuss on Twitter

John Robinson © 2022-2025